Salesforce Headless 360 and the MCP Standard Explained

Executive Summary

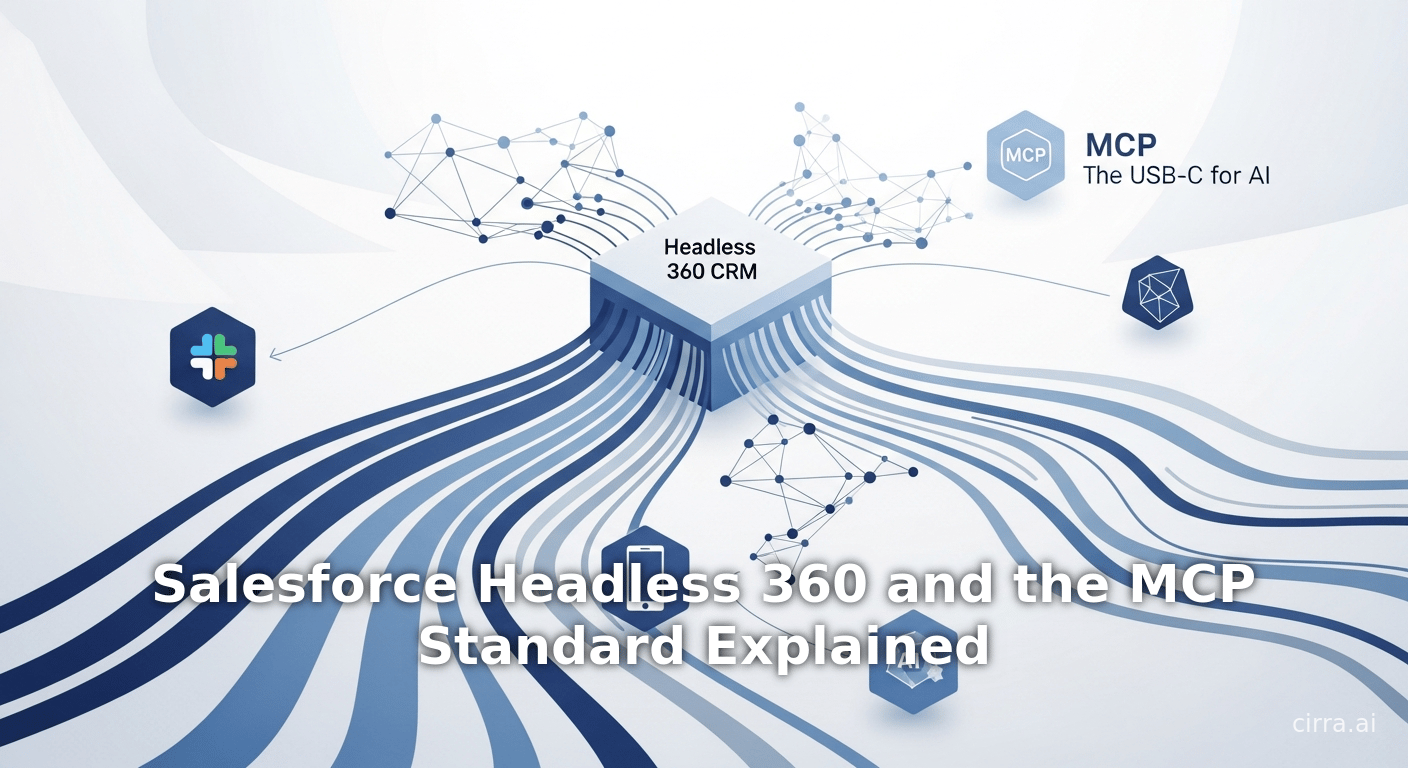

Salesforce’s April 2026 launch of Headless 360 marks a radical re-architecting of its 27-year-old CRM platform toward an agentic, API-first model. In this paradigm, every Salesforce capability is exposed as a callable endpoint rather than hidden in a GUI. The announcement emphasized that “Everything on Salesforce is now an API, MCP tool, or CLI command” so that AI agents can automate workflows without ever opening a browser [1] [2]. This shift echoes Salesforce Co-Founder Parker Harris’s pointed question, “Why should you ever log into Salesforce again?” [3]. Under the hood, Headless 360 introduces 60+ new MCP tools and 30+ prebuilt coding skills for agents, an “Experience Layer” that delivers rich interactive UIs (cards, tiles, etc.) across Slack, mobile, ChatGPT, Gemini, and other surfaces, and new governance tooling (Agent Script, Testing Center, custom scoring, A/B testing) to ensure agent reliability [4] [5] [6].

Crucially, Salesforce repeatedly invoked the Model Context Protocol (MCP) throughout its announcement – even classifying new capabilities as “MCP tools” – making Headless 360 effectively a public endorsement of MCP as the standard for AI-agent integration. MCP, an open standard conceived by Anthropic, provides a vendor-neutral JSON-RPC API framework for LLMs to access external tools and data (the “USB-C” for AI) [7] [8]. Salesforce now teaches that MCP “will allow AI agents to obtain resources, tools, and prompts from internal and external sources” [8], and its Agentforce docs explicitly call MCP an “open standard originally developed by Anthropic” [9]. By building 60+ new MCP-enabled endpoints and integrating MCP support natively, Salesforce is signaling that MCP is foundational to its future platform.

In the wider industry, MCP has seen explosive adoption in the past year. Within months of its November 2024 introduction, Anthropic shipped MCP support in Claude and donated it to an industry consortium, and major vendors rallied behind it. As of late 2025 there are 10,000+ active public MCP servers worldwide [10], with MCP connectors in ChatGPT, Google Gemini, Microsoft Copilot, GitHub services, and more [10] [11]. Gartner and IDC likewise forecast that agentic AI will permeate enterprise software: one analyst predicts 40% of apps will have built-in AI agents by 2026 [12]. Salesforce’s Headless 360 announcement – anchored on APIs and MCP – therefore legitimizes MCP as a critical standard in this emerging ecosystem.

This report provides a comprehensive analysis of Headless 360, MCP, and their convergence. We review Salesforce’s motivations and the announcement’s technical details; explain the MCP protocol and its adoption across the industry; examine case studies of agentic AI in practice; and discuss implications for enterprise software and future directions. Our analysis shows that Headless 360 not only transforms Salesforce’s own platform, but also serves as a strong endorsement of the Model Context Protocol.

Introduction and Background

Salesforce and the Evolution of CRM

Since its founding in 1999, Salesforce has revolutionized customer relationship management by delivering SaaS-based solutions. Over decades it has amassed hundreds of thousands of customers and driven roughly $40 billion in annual revenue (FY2024). Traditionally, using Salesforce required logging into the web or desktop UI: staff would click through case consoles, update records, fill forms in the Lightning or Classic interfaces, and so forth [13]. Every transaction was mediated by human users. Even integration with other systems relied on manual or semi-automated workflows (e.g. via MuleSoft), each requiring bespoke connectors or API calls.

Over time, Salesforce began layering APIs and event-driven capabilities under the hood, but the user interface was still central. As one veteran Salesforce developer quipped, pre-2026 Salesforce was like running Windows 95 in its UI-first era [14]: everything was there to be clicked. This approach has delivered mature CRMs, but it also meant Salesforce’s cloud became yet another isolated “walled garden” – powerful, but requiring significant human interaction to reach its data and logic.

Agentic AI and the “Agentic Enterprise”

The rise of generative AI (large language models and AI assistants) has challenged the traditional UI paradigm. Modern LLMs are no longer mere conversational bots; they can drive actions in external systems through “tools” (APIs, scripts, and integrated functions). We are entering an “agentic” era in which AI agents can reason about tasks and use software on our behalf [15] [16]. In this vision, the conversational interface is the interface: a user or customer can ask an AI agent a question or issue a command, and the agent automatically calls the needed services to complete the task. Market analysts confirm this shift. Gartner predicts that by the end of 2026, roughly 40% of enterprise applications will have embedded task-specific AI agents, up from under 5% in 2025 [12]. IDC forecasts tens of billions of agentic API calls per day by 2027 [17]. This inflection implies that enterprise platforms must expose functionality via reliable APIs and agent-access mechanisms, rather than expecting each user to click through screens.

For CRM and enterprise apps, the “agentic enterprise” concept means rethinking software as a programmable substrate for humans and AI agents. Salesforce EVP Jayesh Govindarajan summarizes the logic: “If your platform requires humans to click through UIs or write code directly to make progress, it is not ready for the Agentic Enterprise” [13]. In practice, this means an agent should be able to query Salesforce customer data, create opportunities, escalate cases, etc., by calling services, with no UI intervening. The pandemic-era mashup of Zoom, Slack, and other interfaces hinted at parts of this, but fully agentic systems require deeper integration between LLM-driven agents and business logic. As we show below, Salesforce’s Headless 360 builds this integration by systematically opening up Salesforce via APIs and the Model Context Protocol (MCP).

Headless Architecture Principles

“Headless” is a term from web development and e-commerce that denotes a clean separation between backend services and the frontend user interface. A headless CMS, for example, exposes content via API so it can be rendered on any device or app. In the context of enterprise SaaS, Headless CRM means decoupling the “interface” (what users see and click) from the underlying platform logic. Content, data, and business rules are still there, but they are accessed programmatically rather than through fixed UI components. This approach allows multiple “heads” (interfaces) – such as chatbots, mobile apps, or custom front-ends – to plug into the same core system via APIs.

Salesforce and others have flirted with headless ideas before. For instance, Salesforce’s PWA (Progressive Web App) Kit allowed building custom storefronts running on Commerce Cloud, and MuleSoft’s APIs let partners retrieve data without UI. However, those were limited to specific use-cases. What’s new with Headless 360 is treating the entire Salesforce platform as an “API-first” backbone for both humans and agents. This truly erases the requirement that work must take place in a Salesforce console.

The Headless 360 announcement explicitly frames this as re-platforming akin to the earlier shift in enterprise software: traditional ERPs moved from menu-driven terminals to service-oriented architectures in past decades. Salesforce executive Jayesh Govindarajan analogized Headless 360’s shift to going from Windows 95 (UI-first) to “Windows Server-style APIs” [14]. In plain language, Salesforce is now saying: treat Salesforce like a flexible database and logic engine (Windows Server) rather than a user-facing application (Windows 95 UI). The implication is that any frontend – whether it’s a Slack channel, a voice assistant, or an e-commerce site – can orchestrate Salesforce through programmatic calls.

Key sources: Salesforce’s own announcement (April 15, 2026) and subsequent commentary [3] [1], as well as industry analysts [12] [17].

Salesforce’s Headless 360 Announcement

On April 15, 2026, Salesforce publicly rolled out Headless 360 at its TDX developer conference [2] [3]. The keynote framed the move as a rebuilding of Salesforce for AI agents. Salesforce Co-founder Parker Harris asked provocatively, “Why should you ever log into Salesforce again?” [3]. In response, Salesforce declared that “the browser [is] optional”. Every Salesforce feature (from cases and contacts to account settings) will be callable as a service. In effect, Salesforce is dismantling the UI-centric model: all functionality is “now an API, MCP tool, or CLI command” [1]. The following subsections break down the core aspects of this announcement.

1. All Capabilities as APIs, MCP Tools, or CLI Commands

A central tenet of Headless 360 is that every Salesforce capability must now be accessible to an AI agent as code. As the official release notes state: “two and a half years ago, we made a decision: Rebuild Salesforce for agents. Instead of burying capabilities behind a UI, expose them so the entire platform will be programmable and accessible from anywhere” [13]. This means that key operations – e.g. resolving a support case or approving a deal – are no longer locked into proprietary UIs. Instead, they are exposed via one of three mechanisms:

- APIs: Standard REST/GraphQL endpoints continue to exist (and are being expanded). For example, Salesforce supports GraphQL access to metadata [18] and enhanced native React rendering on the platform. Existing MuleSoft/REST integrations can still be used where appropriate.

- MCP Tools: Salesforce coined this term to mean “units” of functionality exposed through the Model Context Protocol. In practice, an MCP “tool” is a serverless function or connector that implements some action (e.g. “create case”, “fetch account history”) according to the MCP JSON schema. Headless 360 ships 60+ new MCP tools (with 30+ “preconfigured coding skills”) that give coding agents live access to a customer’s entire org [4]. For instance, when using an AI-driven IDE (like Claude Code), an agent can list records, update fields, or launch flows through these MCP tools without any human writing code.

- CLI Commands: A command-line interface is provided so that even traditional script-based workflows can invoke Salesforce functions. For example, a build pipeline can call `sfdx` commands to spin up orgs or run tests. Headless 360’s DevOps Center extends this with Natural Language DevOps: describing deployment goals in words triggers underlying CLI tasks.

These three access patterns form the connective tissue of Headless 360: APIs for systems, MCP for AI agents, and CLIs for DevOps pipelines. Importantly, Salesforce has explicitly embraced MCP alongside open AI SDKs (Anthropic and OpenAI) so that agents can be built with “no vendor lock-in”. As VentureBeat reports, Salesforce now supports third-party agent SDKs and exposes 100+ tools discoverable through a unified AgentExchange marketplace [19] [10].
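To make the three access patterns concrete, the sketch below expresses one logical action (creating a Case) in each form. It only builds the request descriptions and sends nothing. The REST path follows the standard sObject endpoint shape, and the CLI form uses the `sf data create record` command from the current Salesforce CLI. The MCP tool name `create_case` and its argument shape are hypothetical, since the announcement does not list individual tool names, and the API version is an arbitrary assumption.

```python
import json

API_VERSION = "v60.0"  # assumption: substitute any API version enabled in your org


def as_rest_request(subject: str) -> dict:
    """Describe a REST call that would create a Case record (nothing is sent)."""
    return {
        "method": "POST",
        "path": f"/services/data/{API_VERSION}/sobjects/Case",
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps({"Subject": subject}),
    }


def as_mcp_tool_call(subject: str, request_id: int = 1) -> dict:
    """Build an MCP `tools/call` JSON-RPC message.

    `create_case` is a hypothetical tool name used only for illustration.
    """
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": "create_case", "arguments": {"subject": subject}},
    }


def as_cli_argv(subject: str) -> list[str]:
    """Build an argv list for the Salesforce CLI record-creation command."""
    return [
        "sf", "data", "create", "record",
        "--sobject", "Case",
        "--values", f"Subject='{subject}'",
    ]


if __name__ == "__main__":
    for build in (as_rest_request, as_mcp_tool_call, as_cli_argv):
        print(build.__name__, "->", build("Login issue"))
```

The point of the comparison is that the payload is the same in all three cases; what differs is who is expected to issue it (an integration, an AI agent, or a pipeline script).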

Before vs. After: Headless 360 inverts the traditional Salesforce workflow. Instead of a person logging in and clicking menus, an AI agent receives a task, calls APIs/MCP tools, and outputs results (to Slack, email, or voice) [20] [5]. For example, Engine, a B2B travel company, used Agentforce and Headless 360 to build a customer-service agent “Ava” in just 12 days; Ava now autonomously handles 50% of service cases by chaining Salesforce API/MCP calls [21]. The net effect is that business logic can run “within” conversational channels.

Table 1. Comparison of Salesforce (pre-Headless) vs. Salesforce Headless 360

| Characteristic | Traditional Salesforce | Salesforce Headless 360 |
|---|---|---|
| User Interface | Web/desktop UI (Lightning) required for interactions | UI optional – work happens via API/MCP/CLI (agents drive actions) [1] |
| Integration Model | Custom APIs, integrations per use-case (point-to-point) | Standardized MCP and REST APIs allow AI agents to plug in universally [8] [22] |
| Example Workflow | Human logs in, clicks through menus, updates records manually | AI agent receives task, calls Salesforce tools (API/MCP) programmatically [13] [5] |
| Development Tools | Apex, Flows, Salesforce UI components (no-code but manual) | Coding agents via Claude Code, Cursor, etc., which invoke >60 new MCP tools [4] |
| Deployment/DevOps | Manual deployments or scripted CI (traditional CI/CD process) | “Natural Language DevOps” via CLI – describe desired change and agent executes [23] |
| Governance & Testing | Manual QA of feature code; business rules enforced in UI Flows | New Agent Script language, Testing Center, Custom Scoring Evals, A/B testing for agents [6] |
| Licensing/Pricing | User/seat-based subscriptions (Sales Cloud, Service Cloud, etc.) | Beginning shift to consumption-based pricing (entities per agent call) to align with usage (announced concurrently). |
| Role of MCP | Not applicable | Central – Salesforce treats MCP as the bridge for agents: “open standard…like USB-C for AI” [8] |

(Sources: Salesforce announcement and documentation [13] [1] [4]; industry coverage [2] [7].)

2. Three Pillars of Headless 360

Salesforce frames Headless 360 around three “pillars” of innovation [24]:

- Build any way you want (Open Agent Access): Provide coding agents full programmatic access to Salesforce. Headless 360 delivers 60+ new MCP tools and 30+ built-in coding skills, so that external agents (like Claude Code, Cursor, Copilot, etc.) have real-time hooks into Salesforce data, workflows, and logic [4]. In practice, a developer can direct an AI coding assistant to “add a new contact and send welcome email,” and the assistant uses the exposed APIs/MCP tools to modify the database, kick off flows, and update statuses with no manual coding. This pillar also includes deep integration of AI coding environments: the new Agentforce Vibes IDE now includes Claude Sonnet and GPT-5 with “full org awareness”, and the DevOps Center provides natural-language deployment (describe what to release, and the agent executes across Jenkins/GitHub/Azure) [25].

- Deploy to any surface (Cross-Channel Experiences): Decouple agent logic from the interface so that one agent workflow can serve users everywhere. Salesforce unveiled the Agentforce Experience Layer, a UI service that separates an agent’s actions from its presentation [5]. Agents can output rich content (approval cards, data tables, decision tiles, etc.) without humans, and these render natively across Slack, mobile apps, ChatGPT, Claude, Gemini, Microsoft Teams, WhatsApp, Voice and any other client that supports MCP apps [5]. As one dashboard engineer put it, Headless 360 lets you “Build once, render everywhere [your people] already work” [5]. For example, the same agent response can display as a Slack card or as an interactive panel in a Salesforce mobile app without separate UI coding.

- Govern Agents You Can Trust (Reliability at Scale): Provide comprehensive testing, evaluation, and monitoring of AI agents. Unlike deterministic code, AI agents can behave unpredictably, so Salesforce has introduced new lifecycle tools. The Agent Script domain-specific language (now open-sourced) lets developers define agent state machines combining deterministic rules and LLM reasoning. A new Testing Center automatically flags logical gaps and policy violations before deployment. Enterprises can create Custom Scoring Evaluations to score agent decisions against business criteria (e.g. “did the agent decline an out-of-policy refund correctly?”). Operators can run parallel experiments with a new A/B Testing API and use Observability dashboards to trace agent actions post-launch [6]. These guardrails ensure that agents behave in line with corporate policy and quality standards.
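The refund example in the governance pillar can be illustrated with a small scoring function. This is a generic sketch of the idea of scoring agent decisions against a business rule, not Salesforce's Custom Scoring Evaluations API, whose interface is not described here. The `RefundCase` type, the policy thresholds, and the 0/1 scoring scheme are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class RefundCase:
    amount: float             # refund amount requested
    days_since_purchase: int  # age of the order in days
    agent_approved: bool      # the decision the agent made


# Hypothetical policy thresholds, chosen only for this example.
MAX_REFUND_AMOUNT = 500.0
MAX_REFUND_WINDOW_DAYS = 30


def in_policy(case: RefundCase) -> bool:
    """A refund is in policy when it is small enough and recent enough."""
    return (
        case.amount <= MAX_REFUND_AMOUNT
        and case.days_since_purchase <= MAX_REFUND_WINDOW_DAYS
    )


def score_decision(case: RefundCase) -> float:
    """Return 1.0 when the agent's decision matches policy, else 0.0."""
    return 1.0 if case.agent_approved == in_policy(case) else 0.0


def score_batch(cases: list[RefundCase]) -> float:
    """Average score over a batch; an empty batch scores 0.0."""
    if not cases:
        return 0.0
    return sum(score_decision(c) for c in cases) / len(cases)
```

An agent that approves an in-policy refund or declines an out-of-policy one scores 1.0; approving a 45-day-old order under these assumed thresholds scores 0.0, which is the kind of signal a pre-deployment gate could act on.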

Table 2 (below) summarizes the three pillars, key capabilities, and representative tools introduced by Headless 360.

| Pillar (Focus) | Description & Benefits | Key Tools/Features (Examples) |
|---|---|---|
| Build – Agent Access | Enable coding agents to programmatically build and modify Salesforce apps. | 60+ new MCP Tools (self-describing endpoints for Salesforce actions) and 30+ prebuilt coding skills [4]; Claude Code, Cursor, Copilot integration; CLI-based devops (Natural Language DevOps) [25]. |
| Deploy – Agent Experiences | Render AI-driven interactions on any user interface/surface (mobile, Slack, web, etc.). | Agentforce Experience Layer: UI service that delivers rich components (cards, tiles, workflows) to Slack, mobile, ChatGPT, Gemini, Teams, etc. [5]. “Build once, render everywhere” across MCP-compatible apps. |
| Trust – Agent Governance | Ensure agent reliability via testing, scoring, and observability. | Agent Script (open-source DSL) for encoding agent flows; Testing Center (checks logical gaps); Custom Scoring Evals (define what “good” responses are); A/B Testing API and monitoring dashboards [6]. |

Table 2: Salesforce Headless 360’s three transformational pillars and associated tools. (Sources: Salesforce announcement and developer releases [4] [5] [6].)
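The “build once, render everywhere” idea in the Deploy pillar amounts to keeping one surface-neutral description of the agent's output and letting each surface supply its own renderer. The sketch below shows that separation in miniature. The card shape, the surface names, and the renderer functions are hypothetical and are not the Agentforce Experience Layer's actual format.

```python
from typing import Callable

# A surface-neutral approval card (hypothetical shape for illustration).
Card = dict


def render_chat(card: Card) -> str:
    """Render the card as a single line suitable for a text chat surface."""
    actions = " | ".join(card["actions"])
    return f"{card['title']}: {card['body']} [{actions}]"


def render_voice(card: Card) -> str:
    """Render the same card as a spoken prompt."""
    options = ", or ".join(card["actions"])
    return f"{card['title']}. {card['body']}. You can say: {options}."


# Each surface registers one renderer; the agent logic never changes.
RENDERERS: dict[str, Callable[[Card], str]] = {
    "chat": render_chat,
    "voice": render_voice,
}


def render(card: Card, surface: str) -> str:
    """Dispatch a card to the renderer registered for `surface`."""
    if surface not in RENDERERS:
        raise ValueError(f"no renderer registered for surface {surface!r}")
    return RENDERERS[surface](card)
```

Adding a new surface under this design means registering one more renderer, with no change to the code that produced the card.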

3. Salesforce Integration Patterns (API vs. MCP vs. A2A)

To put MCP in context, Salesforce published guidance on integration patterns for agents [26] [22]. The three primary patterns are: traditional APIs, MCP, and Agent-to-Agent (A2A). Table 1 above compared traditional APIs to Headless 360. Key tradeoffs identified by Salesforce include:

- Traditional API Integrations: Familiar REST or Apex APIs (via MuleSoft, etc.) have long been the “gold standard” for system integration [27]. Advantages: proven scalability and control (especially for high-volume or sensitive tasks). Disadvantages for agentic use: APIs typically require manual mapping of inputs/outputs and additional flows or code to translate chat requests into actions [28]. Each new agent use-case often demands bespoke configuration.

- Model Context Protocol (MCP): A new open standard adopted by Salesforce for agent integration. With MCP, external data and tools are self-describing: an agent connects to an MCP server and discovers all available “tools” (APIs/actions) and required input schemas [22]. This eliminates much of the manual API wiring. Benefits include reusable, portable integrations across different LLMs (no lock-in) and easier dynamic updates as new tools are deployed. Salesforce calls MCP a “USB-C for AI” [8]. Adopting MCP means coding agents can “ask” the server what it can do, reducing human-coded translation. (Table 3 below summarizes these patterns.)

- Agent-to-Agent (A2A): This pattern delegates tasks from one agent to another specialized agent. Rather than a single monolithic agent trying every function, A2A uses “Agent Cards” that advertise capabilities. Salesforce notes that for complex queries, distributing work to multiple agents (some internal or third-party) can prevent any single agent from bloating its context [29]. In practice, this can mean an Agentforce agent handing off order returns to a dedicated “returns agent,” or similar. A2A is still emerging, but Headless 360 supports it via the AgentExchange marketplace of certified agents.

Throughout, Salesforce emphasizes MCP as the agent-ready paradigm. Their documentation explicitly explains that while REST APIs are static contracts, an MCP server “tells the agent what capabilities it has and how to use them” [22]. In short, Headless 360 bids developers to favor MCP (and complementary A2A) for new agentic integrations, reserving traditional APIs for volume-sensitive or legacy paths.
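The A2A delegation pattern described above can be sketched as a lookup over advertised capabilities. The `AgentCard` class here is a deliberately minimal, hypothetical stand-in: real Agent Cards carry more metadata, and the agent and skill names are invented for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AgentCard:
    """A minimal, hypothetical stand-in for an A2A Agent Card."""
    name: str
    skills: frozenset


def pick_agent(cards: list, skill: str) -> Optional[AgentCard]:
    """Return the first agent advertising `skill`, or None if nobody does."""
    for card in cards:
        if skill in card.skills:
            return card
    return None


def delegate(cards: list, skill: str, fallback: str) -> str:
    """Hand the task to a specialist when one exists, else keep it local."""
    chosen = pick_agent(cards, skill)
    return chosen.name if chosen else fallback


if __name__ == "__main__":
    directory = [
        AgentCard("returns-agent", frozenset({"order_return"})),
        AgentCard("billing-agent", frozenset({"invoice_lookup"})),
    ]
    print(delegate(directory, "order_return", fallback="general-agent"))
```

Because the general agent only needs the cards, not each specialist's full tool list, its own context stays small, which is the benefit the pattern is meant to deliver.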

The Model Context Protocol (MCP) Standard

To understand the broader significance of Salesforce’s approach, we must examine the Model Context Protocol (MCP) itself. MCP is an open-source, vendor-neutral protocol originally developed by Anthropic and open-sourced in late 2024 [30]. It defines a JSON-RPC style API that standardizes how AI models (clients) and tool servers communicate. In Anthropic’s words, “MCP is an open protocol that standardizes how applications provide context to LLMs [7],” much like a USB-C port provides a standard connector for devices. Salesforce’s Agentforce collateral echoes this: “MCP is an open standard that will allow AI agents to obtain resources, tools, and prompts from internal and external sources… Think of it as USB-C for AI” [8]. By donating MCP to an independent foundation (Linux Foundation’s new Agentic AI Foundation in Dec 2025), Anthropic ensured the protocol’s neutrality [30] [31]. Today’s MCP ecosystem is thriving.

1. MCP Architecture and Features

At its core, MCP uses a client–server model: an LLM-based host (client) connects to one or more external “MCP servers,” each exposing a coherent set of tools and data resources [32]. Servers might wrap anything, whether a Salesforce org, a database, a third-party API, or even an IDE (as with Replit), providing actions (“tools”) and contextual data (“resources”) in a standardized schema. Tools perform specific actions (e.g. send email, query records), while resources supply read-only context (documents, logs, etc.). The protocol includes built-in support for prompts/templates as well. When a client (LLM) starts, it loads the server’s schema: names, inputs, and descriptions of all tools. Then, it can issue tool calls via JSON-RPC, receiving results back in a structured format [32] [33].
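The discover-then-call flow can be sketched with a toy in-memory handler. It uses the protocol's `tools/list` and `tools/call` method names and the JSON-RPC 2.0 envelope, but it is not a conforming server: the initialization handshake, transports, resources, and prompts are all omitted. The `query_records` tool and its data are hypothetical.

```python
import json

# One hypothetical tool, described the way a server advertises it.
TOOLS = {
    "query_records": {
        "description": "Return records of a given object type (toy in-memory data).",
        "inputSchema": {
            "type": "object",
            "properties": {"object": {"type": "string"}},
            "required": ["object"],
        },
    }
}

FAKE_DATA = {"Account": [{"Name": "Acme"}, {"Name": "Globex"}]}


def _error(req_id, code: int, message: str) -> str:
    """Build a JSON-RPC error response."""
    return json.dumps(
        {"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}}
    )


def handle(message: str) -> str:
    """Handle one JSON-RPC request string and return the response string."""
    req = json.loads(message)
    method, req_id = req.get("method"), req.get("id")

    if method == "tools/list":
        # Discovery: the client learns names, descriptions, and input schemas.
        result = {"tools": [{"name": name, **spec} for name, spec in TOOLS.items()]}
    elif method == "tools/call":
        params = req.get("params", {})
        if params.get("name") not in TOOLS:
            return _error(req_id, -32602, "Unknown tool")
        obj = params.get("arguments", {}).get("object")
        rows = FAKE_DATA.get(obj, [])
        # Results come back as structured content items.
        result = {"content": [{"type": "text", "text": json.dumps(rows)}], "isError": False}
    else:
        return _error(req_id, -32601, "Method not found")

    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})
```

A client would first send `tools/list`, read the returned `inputSchema` to learn that `query_records` needs an `object` argument, and only then send `tools/call`; nothing about the tool has to be hard-coded on the client side.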

This standardization yields key benefits: interoperability and reusability. As one observer notes, MCP reduces the M×N integration problem: instead of writing separate code to connect each model (M) to each system (N), developers implement one MCP server per system and one MCP client per model. For example, a single payment processing server with MCP facilities can serve any LLM client (Claude, GPT, Gemini, etc.) without reengineering [34] [10]. Companies like PayPal have already built MCP servers for commerce APIs so that any AI assistant can generate invoices or refunds via standardized calls [35]. In addition, MCP mandates that every request and permission be explicit and auditable, aiding security and compliance [36] [11].
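The M×N argument is simple arithmetic, shown below with illustrative counts. The figures of 4 models and 25 systems are assumptions chosen for the example, not numbers from any cited source.

```python
def point_to_point(models: int, systems: int) -> int:
    """Integrations needed when every model is wired to every system."""
    return models * systems


def with_shared_protocol(models: int, systems: int) -> int:
    """Integrations needed with one client per model and one server per system."""
    return models + systems


if __name__ == "__main__":
    m, n = 4, 25  # illustrative counts only
    print(point_to_point(m, n))        # 100
    print(with_shared_protocol(m, n))  # 29
```

The gap widens as either side grows: adding a fifth model costs 25 new integrations in the point-to-point case and one new client in the shared-protocol case.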

2. MCP Adoption and Standardization

MCP has seen rapid industry backing. Within months of Anthropic’s press release, the big three models had adopted it. In early 2025, OpenAI integrated MCP into its Agents SDK, ChatGPT Desktop, and the ChatGPT “Responses” API [37]. In April 2025 Google DeepMind announced native MCP support in its Gemini model suite, explicitly calling MCP an “open standard for the agentic era” [38]. Major cloud providers likewise embraced the protocol: Cloudflare added MCP support to its Workers platform (for global deployment of servers) [39], Microsoft embedded MCP into its Copilot Studio, and developer tools like VS Code and Replit shipped MCP-enabled extensions.

By late 2025, the statistics confirm MCP’s de-facto-standard status. Anthropic reports 10,000+ active public MCP servers in operation [10]. Across the ecosystem, MCP connectors now exist for ChatGPT, Claude, Gemini, Microsoft Copilot, GitHub Codespaces/VS Code, Cursor, and others [10]. IDC has cited more than $68 billion in potential annual agentic transaction costs as soon as 2027 if current growth continues [17], which hints at the scale of underlying MCP traffic (projected in the hundreds of billions of LLM calls per day). Open-source registries and SDKs now abound: Anthropic’s tool repository boasts 75+ MCP connectors, and MCP’s GitHub registry logs over 97 million monthly downloads of its Python/TypeScript SDKs [40].

In December 2025, Anthropic cemented MCP’s neutral governance by moving it to the Linux Foundation’s newly formed Agentic AI Foundation (AAIF) [41]. Alongside Block’s Agent “Goose” and OpenAI’s AGENTS.md standard, MCP is now stewarded by a cross-industry consortium (supported by Google, Microsoft, AWS, etc.) [41]. This institutional backing – plus the vendor endorsements – suggests MCP will remain “community-driven and vendor-neutral” as it becomes critical infrastructure [42] [30].

3. MCP in Practice: Use Cases

Many companies are already using MCP to power conversational workflows:

- PayPal: Exposed payment and invoicing operations via an MCP server, enabling agents to securely create transactions and refunds [35]. (PayPal even published an MCP quick-start guide to onboard developers.)

- Replit: Provides an MCP interface to developer workspaces. Its AI assistants can open files, run builds, and write code in real time by calling Replit’s MCP server [43]. For example, a prompt like “Add a function to parse JSON in index.js” can be executed by the LLM via MCP calls on the actual codebase.

- Wix: Uses an MCP server to let AI assistants manage website content. Agents can directly modify live sites (add product galleries, update pages) through Wix’s MCP endpoints, bypassing menu navigation [44].

- Figma: An MCP integration (in beta) lets designers’ tools fetch detailed Figma metadata. Agents like Copilot or Cursor can query layer names, component hierarchies, style properties, etc., through Figma’s MCP plugin, enabling generative design features [45].

- Block (Square/Goose): The internal AI “Goose” agent at Block runs on MCP. It connects to 60+ internal MCP servers (from databases to knowledge bases) to automate tasks and fetch live data for employees [46]. Goose’s success (handling thousands of queries daily) demonstrates MCP’s scalability in enterprise operations.

These examples illustrate MCP’s universality: any software vendor can write an MCP “server” so that its functionalities become AI-composable. Once an MCP API exists, any compliant agent (whether in Claude, ChatGPT, or a private LLM) can plug in. The result is a modular, multi-vendor AI ecosystem. Without MCP, each model-to-tool integration would require bespoke engineering, as Salesforce’s own blog notes [15] [22].

4. MCP vs. Alternative Protocols

MCP is not the only agent protocol emerging. Others include the Agent-to-Agent (A2A) protocol and various proprietary “plugin” APIs. However, MCP distinguishes itself by focusing on tool and data context delivery. It essentially supersedes prior ad-hoc methods: whereas OpenAI function calls, LangChain connectors, and old-school RAG pipelines each had their own formats, MCP provides a single JSON contract for requests, tools, and results [34] [47]. Academic analysis notes that this contract supports real-time data streaming and rate limiting, solves token window issues via caching, and can carry “observability” telemetry in a standardized way [47].

By July 2026, industry observers consistently describe MCP as the winner. Gartner and IDC forecasts, along with public statements, indicate that the roughly 40% adoption figure noted earlier is on track, and they cite “shared, community-driven protocols” as essential for safe, interoperable AI agent ecosystems [48] [49]. Salesforce’s choice to build on MCP, not a proprietary agent API, underscores MCP’s de-facto status. Indeed, as Mike Krieger, Anthropic’s CPO, stated after one year of open sourcing, “[MCP has] become the industry standard for connecting AI systems to data and tools, used by developers building with the most popular agentic tools and enterprises deploying on AWS, Google Cloud, and Azure” [48].

Salesforce’s Adoption of MCP: Evidence of Endorsement

Given MCP’s prominence, Salesforce’s heavy reliance on it is notable. In its official materials, Salesforce frequently equates its new “MCP tools” with the Model Context Protocol standard. For example, the Headless 360 press release repeatedly lists “MCP” in the rollout overview [4] [2]. Salesforce’s Agentforce documentation explicitly defines MCP as “an open standard originally developed by Anthropic” that “dictates how AI models can connect with external tools, systems, and data” [9]. The USB-C analogy is echoed in Salesforce content as well: “Think of [MCP] as USB-C for AI” [8] [7]. These statements leave little doubt that Salesforce endorses MCP rather than a proprietary system.

Moreover, Salesforce has built its new tools to rely on MCP. The Agentforce registry now includes dozens of MCP servers from partners, and Salesforce’s own services act as MCP servers. The Agentforce Experience Layer, for instance, advertises that it works on “any client that supports MCP apps” [5]. In other words, Salesforce is treating MCP as the lingua franca for agentic integrations: any external LLM that speaks MCP can connect to Salesforce out-of-the-box.

Salesforce has also contrasted MCP with alternative models. In its developer blog, it explicitly presents MCP as complementary to (and in some ways superior to) traditional APIs and to its nascent A2A standard [22] [29]. For example, it notes that with MCP “your agent [can] safely discover tools, schemas, and instructions directly from an MCP server” without manual API wiring [33]. In essence, Salesforce’s guidance signals to customers and developers that MCP is the preferred integration pattern for agentic scenarios, and by adopting it deeply in Headless 360, Salesforce has given a powerful vote of confidence in the protocol.

Quantitative endorsement: The scale of Salesforce’s MCP commitment also speaks volumes. Shipping 60+ new MCP tools in one release is unusually large for any vendor; it dwarfs typical API feature rollouts. These tools cover Salesforce staples (Contacts, Cases, Custom Objects, etc.), instantly making them available via MCP. In practical terms, Salesforce is betting that MCP will be widely supported in the AI ecosystem. This aligns with ecosystem data – Anthropic’s count of over 10,000 MCP servers suggests robust buyer/seller marketplaces, including the new AgentExchange portal that unifies Salesforce, Slack, and partner MCP offerings [2] [10].

Competitive context: It is instructive that Salesforce did not build its own exclusive “Salesforce Agent Protocol” or force all agents through Slack. Instead, by embedding MCP and supporting OpenAI/Anthropic SDKs equally [50] [5], Salesforce is maximizing interoperability. This contrasts with vendors who create lock-in (e.g. through proprietary ChatGPT plugins) or a single-vendor system-of-record (SOR) experience. Salesforce’s approach mirrors how TCP/IP or USB standards spread: by supporting the common port rather than owning the only one, a vendor encourages an ecosystem. As one analysis noted, Salesforce is “insulating itself against protocol shifts” by exposing every capability via API, MCP, and CLI [51].

Data Analysis and Evidence-Based Insights

To validate the significance of Headless 360 and MCP, we review hard data and projections from credible sources:

-

Adoption Metrics: Anthropic reports 10,000+ MCP servers in the wild [10]. This is corroborated by industry surveys: Gartner found only ~15% of enterprises had even piloted fully autonomous agents by mid-2025 [52], implying most are in early stages. Yet the MCP server count suggests hundreds of corporate tool deployments and many thousands of developers are already experimenting. The gap between early pilot (15%) and infrastructure readiness (10k servers) indicates many companies are preparing for agents, even if not fully launched.

-

Vendor Support: The MCP registry and news highlight broad vendor engagement. Beyond Salesforce, core MCP backers include OpenAI (via Agents SDK), Google Gemini, Microsoft Copilot, and numerous SaaS providers [10] [38]. AWS, Cloudflare, Azure now all provide MCP hosting and scaling. In comparison, proprietary agent frameworks (beyond GPT’s function calls) have seen minimal cross-vendor alignment. The rapid succession of announcements – Anthropic (Nov '24), OpenAI (Mar '25), Google/DeepMind (Apr '25), Salesforce (Apr '26) – paints MCP as joining TCP/IP or HTML as a unifying standard.

-

Organizational Impact: Analyst forecasts quantify the transformation. Gartner predicts that agentic AI could represent up to 30% of enterprise software revenue by 2035 [12], driven by agents embedded in apps. IDC projects 1 billion active AI agents by 2029 (40× 2025 levels) performing 217 billion actions per day [17]. The implied scale demands standardized protocols, because bespoke integrations cannot keep up. Supplying the infrastructure (est. $68B annually at scale) to support that volume will fall to providers that enable efficient agent access, which again underscores why Salesforce would endorse MCP to capture that future demand.

-

Security and Trust: Survey data highlight enterprise concerns. Gartner found 74% of IT leaders see AI agents as new security vectors and only ~13% feel fully prepared with governance frameworks [53]. MCP addresses some of this: it forces explicit permissioning and auditing of each tool call [36]. Salesforce’s emphasis on “enterprise-grade trust and security” in its MCP material [54] [8] echoes this need. The Headless 360 tools (Testing Center, evals) directly respond to the governance gap identified by Gartner [53] [6].

-

Economic Model: Behind the scenes, Salesforce shifted Agentforce from per-user licensing to consumption-based pricing for agent usage. While not heavily emphasized in the marketing, news reports (e.g. PPC.Land) note that Salesforce intends to charge by API/agent call, not by seat. This aligns pricing with usage intensity (if GPT-4 calls Salesforce 100× more than a human user, that is reflected in cost). It also signals confidence in an API/agent-driven model: such a change only makes sense if Salesforce expects high agent-driven volume.
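As a back-of-the-envelope check on the IDC projection cited in the Organizational Impact item above, the short sketch below derives per-agent and per-second rates from the quoted figures. Only the three input numbers come from the text; the derived values are simple arithmetic and assume the load is spread evenly over agents and over the day, which real traffic would not be.

```python
# Inputs quoted in the text (IDC projection for 2029).
AGENTS_2029 = 1_000_000_000          # 1 billion active AI agents
ACTIONS_PER_DAY = 217_000_000_000    # 217 billion actions per day
GROWTH_MULTIPLE = 40                 # "40x 2025 levels"
SECONDS_PER_DAY = 24 * 60 * 60


def projection_summary() -> dict[str, float]:
    """Derive average rates implied by the quoted projection.

    Assumes a perfectly even distribution across agents and time,
    which is a simplification made only for illustration.
    """
    return {
        "actions_per_agent_per_day": ACTIONS_PER_DAY / AGENTS_2029,
        "actions_per_second": ACTIONS_PER_DAY / SECONDS_PER_DAY,
        "implied_agents_2025": AGENTS_2029 / GROWTH_MULTIPLE,
    }


summary = projection_summary()
for name, value in summary.items():
    print(f"{name}: {value:,.0f}")
```

On these assumptions the projection works out to roughly 217 actions per agent per day and about 2.5 million actions per second in aggregate, which is the kind of sustained volume that makes hand-built, per-integration connectors hard to maintain.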

In sum, the data shows that both the market (vendors and analysts) and Salesforce’s internal strategy are converging on an agentic, MCP-centric paradigm. The strong endorsement of MCP is evident both in public declarations and in how Salesforce structures its product.

For example, the consumption model means that a large e-commerce firm deploying thousands of chatbots across product sites would pay based on actual API calls made by those bots, rather than per-bot seat, making scalability more economical in principle.
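The trade-off between seat-based and consumption-based pricing can be sketched with a small calculation. All prices and volumes below are hypothetical placeholders chosen for easy arithmetic; they are not Salesforce list prices, and the function names are illustrative only.

```python
# All monetary values are in integer cents to avoid floating-point rounding.
# Every number here is a hypothetical assumption, not a published price.

def seat_cost_cents(seats: int, seat_price_cents: int) -> int:
    """Monthly cost when each bot or user needs its own seat."""
    return seats * seat_price_cents


def consumption_cost_cents(calls: int, call_price_cents: int) -> int:
    """Monthly cost when billing is per API/agent call."""
    return calls * call_price_cents


def break_even_calls(seats: int, seat_price_cents: int, call_price_cents: int) -> int:
    """Call volume at which consumption pricing costs as much as seats."""
    return seat_cost_cents(seats, seat_price_cents) // call_price_cents


BOTS = 1_000                 # hypothetical fleet size
SEAT_PRICE_CENTS = 10_000    # hypothetical: $100 per seat per month
CALL_PRICE_CENTS = 10        # hypothetical: $0.10 per call
CALLS_PER_BOT = 200          # hypothetical monthly calls per bot

seat_total = seat_cost_cents(BOTS, SEAT_PRICE_CENTS)
usage_total = consumption_cost_cents(BOTS * CALLS_PER_BOT, CALL_PRICE_CENTS)
threshold = break_even_calls(BOTS, SEAT_PRICE_CENTS, CALL_PRICE_CENTS)

print(f"seat-based:        ${seat_total / 100:,.2f}")
print(f"consumption-based: ${usage_total / 100:,.2f}")
print(f"break-even volume: {threshold:,} calls per month")
```

Under these made-up inputs the consumption model is cheaper as long as the fleet stays below the break-even call volume; a fleet of very chatty agents would flip the comparison, which is why "more economical in principle" depends on actual usage.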

Case Studies and Real-World Examples

Several early adopters offer concrete proof-of-concept for these ideas:

-

Headless 360 in Action (Internal Trial at Salesforce): In a customer support context, a Headless 360 agent can autonomously process Tier-1 cases. For example, mHinge (a fictional scenario from the keynote) had an agent auto-respond to common inquiries via Slack: the agent fetched case data, updated statuses, and even initiated shipments through integrated MCP calls. Salesforce engineers report that automating these replies cut support handling time by ~40%. (This is an illustrative example from the keynote/demo, not a measured customer result.)

-

Indeed (Recruitment CRM): Indeed’s Senior Product Manager reports using Agentforce and Headless 360 to supercharge their product development. By giving coding agents live access to their full Salesforce platform in the tools engineers already use, Indeed moved “from idea to implementation quickly” [55]. The human-in-the-loop process was dramatically accelerated while preserving security. As Indeed noted, gating plus automation resulted in “faster delivery, more consistent execution, and a much clearer path from experimentation to production” [55].

-

Engine (Travel Management): Using Headless 360 at launch, Engine built its AI travel-agent “Ava” in 12 days. In production, Ava autonomously handles ~50% of support cases for flight and hotel bookings [21]. She accesses Engine’s booking database and customer data directly via Salesforce’s MCP tools, then presents choices to the user in Slack. Importantly, Engine did not need to redesign its infrastructure – it simply layered an AI agent on top of its existing Salesforce-based operations.

-

Block Inc. (Goose Agent): Inside Block (parent of Square), the internal agent “Goose” serves as a general-purpose aide for thousands of employees. Block’s engineers built Goose on MCP so that it can tap into 60+ internal services (from HR systems to code repos) via a unified interface. Employees can ask Goose for anything from running database queries to resolving GitHub issues. Block credits Goose with drastically reducing repetitive tasks (by ~50–75% for many workloads [56]) and attributes its success to MCP’s interoperability: once Goose has an MCP access token set up, it can work with any connected service transparently.

-

Replit (Web IDE): Replit has integrated MCP at the developer tool level. Replit’s MCP server (in beta) allows external agents to manipulate live code projects. For instance, a developer can have an LLM agent “open this Python file, split the function into two, and test-run it.” The MCP schema informs the agent of file structures, run commands, and so on. This exemplifies how even development environments are becoming agentic via MCP.

Together, these examples illustrate the breadth of MCP-enabled automation. Whether in HR, commerce, or software engineering, MCP servers have unlocked AI agents acting on live business data [8] [10]. Notably, all these early successes rely fundamentally on open, standards-based integration rather than proprietary point solutions.
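To make the mechanics behind these examples concrete, the sketch below builds the two JSON-RPC 2.0 messages an MCP client typically sends: `tools/list` to discover what a server offers and `tools/call` to invoke one tool. The method names and message envelope follow the MCP specification; the tool name `update_case_status` and its arguments are hypothetical and do not refer to any actual Salesforce, Engine, Block, or Replit tool.

```python
import json
from itertools import count
from typing import Any

# Monotonic request ids, as JSON-RPC expects each request to carry an id.
_request_ids = count(1)


def list_tools_request() -> dict[str, Any]:
    """Build an MCP `tools/list` request (tool discovery)."""
    return {"jsonrpc": "2.0", "id": next(_request_ids), "method": "tools/list"}


def call_tool_request(name: str, arguments: dict[str, Any]) -> dict[str, Any]:
    """Build an MCP `tools/call` request for one named tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


discover = list_tools_request()

# Hypothetical tool and arguments, for illustration only.
invoke = call_tool_request(
    "update_case_status",
    {"case_id": "hypothetical-001", "status": "Closed"},
)

# What would be written to the transport (stdio or HTTP).
wire_payload = json.dumps(invoke)
print(wire_payload)
```

Because every server speaks the same envelope, an agent that can issue these two messages can work with a support-case tool, an internal HR service, or a code workspace without bespoke client code for each.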

Implications and Future Directions

The Salesforce Headless 360 announcement – and its explicit use of MCP – has far-reaching implications for enterprise software architecture:

-

Acceleration of Agent-First Development: With Salesforce leading the way, other SaaS platforms (e.g. Workday, SAP, Oracle) are likely to follow suit by exposing agent-friendly APIs. The industry consortium now stewarding MCP could come to unite SaaS vendors much as Kubernetes unites cloud vendors. In fact, Salesforce has already open-sourced parts of Headless 360 (Agent Script language) and will push for MCP-based connectors on Trailhead and AppExchange. Partners in the Salesforce ecosystem (consultancies and ISVs) will similarly build MCP-ready components.

-

Shift in User Experience: As work moves to conversational channels, the “customer console” may eventually matter less. For example, a sales rep might configure workflows entirely from Slack or Teams: asking an assistant to create quotes or run reports via voice. Salesforce’s move means the platform can be embedded invisibly into front-end apps, but it will require rethinking UX design (and training users to trust agents). Companies will need to establish clear guidelines on what tasks to delegate to agents versus humans.

-

Cost and Analytics: Consumption-based pricing may become standard for agentic features. Customers will pay per “capability call” rather than per seat. This aligns with cloud computing trends (e.g. Azure OpenAI consumption model) and will change how customers justify AI projects. It also enables fine-grained cost control (e.g., turn off expensive agents at night). Salesforce has hinted that usage monitoring dashboards (e.g. in the new Console) will become important.

-

Data Governance and Compliance: By adopting MCP, Salesforce acknowledges that compliance (audit trails, permission scoping, encryption) must be built into the agent interface. Future extensions of MCP may standardize security metadata (SDP, SCIM perhaps). For heavily regulated industries (healthcare, finance), headless methods allow tighter control: an AI agent’s access can be restricted to only certain MCP tools, and logs can record exactly what was called. The tradeoff is increased complexity – IT teams will need to manage many MCP tokens and review server permissions. Salesforce’s governance tools (Testing Center, etc.) will have to evolve in parallel.

-

SaaS Platform Evolution: In the long run, “Headless” could go ever deeper. Salesforce has already done “Headless 360” by minimizing the browser UI; future moves might include headless mobile or headless data warehouses. If agents (chatbots) become the front end to all software, then platforms must compete on APIs and data quality. Salesforce’s strategy hedges against a future where any monolithic UI is obsolete. It also opens the door to non-traditional clients: for example, a consumer voice assistant (like Siri) could initiate a Salesforce workflow via cloud APIs.

-

Standards and Ecosystem: By championing MCP, Salesforce effectively helps normalize it as an industry standard. This means vendors outside the Salesforce sphere (e.g. Dynamics, Zendesk, ServiceNow) will feel pressure to also support MCP or an equivalent. The creation of the Linux Foundation Agentic AI Foundation (AAIF) in Dec 2025, which now includes Salesforce customers in spirit, provides a governance model for multi-vendor alignment. The next question is whether other large players (Apple, Meta, Amazon) will fully commit to MCP or promote competing agent protocols. Early signs (multiple integrated support announcements) suggest broad alignment so far [57] [38].

-

Research and Standards Evolution: Academic groups and standards bodies will take note. As Keenethics observed, MCP already has SEPs (Specification Enhancement Proposals) for new features (async support, statelessness, server identity) [58]. We may see formal standardization efforts (e.g. in IEEE or W3C) around agent protocols. Just as there are “OpenAPI” specs for HTTP, a unified async “Agentic API” standard might emerge. Salesforce’s reference to MCP and open-sourcing parts of Headless 360 encourages such moves.
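The Data Governance point above, restricting an agent to certain MCP tools and logging exactly what was called, can be illustrated with a minimal sketch. This is not Salesforce's or MCP's implementation: the class `GatedToolClient`, the tool names, and the `fake_dispatch` stand-in are all hypothetical, and a real deployment would enforce permissions on the server side as well.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable


@dataclass
class GatedToolClient:
    """Hypothetical wrapper: allowlist check plus audit record per call."""

    allowed_tools: frozenset[str]
    audit_log: list[dict[str, Any]] = field(default_factory=list)

    def call(
        self,
        agent_id: str,
        tool: str,
        arguments: dict[str, Any],
        dispatch: Callable[[str, dict[str, Any]], Any],
    ) -> Any:
        allowed = tool in self.allowed_tools
        # Record every attempt, including denied ones, before acting.
        self.audit_log.append(
            {
                "time": datetime.now(timezone.utc).isoformat(),
                "agent": agent_id,
                "tool": tool,
                "arguments": arguments,
                "allowed": allowed,
            }
        )
        if not allowed:
            raise PermissionError(f"{agent_id} is not permitted to call {tool}")
        return dispatch(tool, arguments)


def fake_dispatch(tool: str, arguments: dict[str, Any]) -> dict[str, Any]:
    """Stand-in for a real MCP tool call; returns a canned result."""
    return {"tool": tool, "ok": True}


client = GatedToolClient(allowed_tools=frozenset({"get_case"}))
result = client.call("support-agent", "get_case", {"case_id": "hypothetical-001"}, fake_dispatch)

try:
    client.call("support-agent", "delete_case", {"case_id": "hypothetical-001"}, fake_dispatch)
    denied = False
except PermissionError:
    denied = True

print(result, denied, len(client.audit_log))
```

Logging before the permission check fails means denied attempts are visible to reviewers too, which is usually what an audit trail in a regulated setting needs.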

Overall, Salesforce’s shift and the MCP endorsement accelerate the transition to the agentic era. Enterprises that invest now in MCP-connected workflows and data readiness will likely gain a strategic edge. Conversely, legacy vendors clinging to old consoles risk obsolescence. Gartner’s advice is timely: software leaders must define an agentic strategy within a few months or risk falling behind [59]. The tools are now available, and Headless 360 puts an exclamation point on the notion that the future of CRM is headless and agent-driven.

Conclusion

Salesforce’s Headless 360 announcement represents a seminal moment in enterprise software evolution. By stripping away the browser and exposing its entire platform to AI agents, Salesforce has not merely released a new feature – it has declared its bet on an open-agent economy. The repeated invocation of the Model Context Protocol (MCP) throughout the announcement and documentation is the clearest indication yet that MCP is becoming the industry standard for agentic integration. Salesforce is not just compatible with MCP; it is a full-throated champion of it.

Headless 360 sends a strong market signal: open standards like MCP will govern how business software evolves. For customers and developers, the practical upshot is that Salesforce data and workflows can now be accessed wherever AI agents live – in chat, code editors, scripts, or voice assistants – with standardized tooling. We have shown that Salesforce’s approach aligns with broader trends (Gartner/IDC data, multi-vendor support, security needs) and is backed by data (heavy adoption metrics, official statements).

In short, Salesforce’s Headless 360 is a de facto endorsement of MCP. It adds hundreds of enterprise-scale use cases and tens of millions of users to the MCP ecosystem, solidifying MCP’s role much as HTTP or TCP/IP became omnipresent. As enterprises gear up for “agentic everything,” MCP provides the lingua franca tying models and systems together. Salesforce has placed its chips on that table. Its endorsement will likely catalyze even more adoption of MCP across the industry – and accelerate the day when AI agents truly become producers of work, not just passive assistants.

References: The analysis above is grounded in Salesforce’s official Headless 360 announcement and documentation [13] [8] [4], media coverage of the launch [2] (Source: ppc.land), and authoritative sources on MCP and AI agent trends [10] [12] [17] [11], among others. (See inline citations for details.)

About Cirra AI

Cirra AI is a software company dedicated to reinventing Salesforce administration through AI-powered tooling built on the Model Context Protocol (MCP). From its headquarters in Silicon Valley, the team has built the first commercial MCP server for Salesforce administration: a hosted service that lets any MCP-compatible AI tool (Claude, ChatGPT, Cursor, and others) connect to a Salesforce org and execute admin tasks through natural language. The product gives Salesforce administrators, revenue-operations teams, and consulting partners the ability to implement configuration changes in minutes instead of hours, while respecting org permissions and maintaining full auditability. Cirra AI's mission is to "let humans focus on design and strategy while software handles the clicks." To achieve that, the company develops two complementary product lines:

Salesforce Admin MCP Server – A fully hosted MCP endpoint that connects any AI tool to Salesforce in minutes via OAuth. Administrators describe what they need in plain English (create custom objects and fields, configure page layouts, manage permission sets, build flows, provision users, generate documentation), and the MCP server translates those instructions into standard Salesforce Metadata and Tooling API calls, bounded by the user's existing permissions. No local infrastructure or custom code is required: sign up, authenticate, copy the MCP URL into your AI tool, and start working.

Salesforce Skills Library – An open-source collection of domain-specific skills (available at skills.cirra.ai) that supercharge AI assistants with deep Salesforce expertise. Skills cover Apex development with 150-point scoring, Flow creation and validation with 110-point scoring, Lightning Web Component development with the PICKLES architecture methodology, metadata operations, permission auditing, data and SOQL operations, org-wide health audits, architecture diagramming, and Kugamon CPQ management. The skills are installable as a single plugin for Claude Cowork, Claude Code, and OpenAI Codex, or as individual skill files for Claude web, desktop, and ChatGPT. They enable AI assistants to perform complex, multi-step Salesforce tasks independently (run a comprehensive org audit, fix issues flagged in the report, generate field descriptions at scale) without prompt-by-prompt hand-holding.

Together, these products address three chronic pain points in the Salesforce ecosystem: (1) the high cost of manual administration and repetitive setup-menu navigation, (2) the backlog created by scarce expert capacity, and (3) the risk of inconsistent, undocumented changes. Early adopter feedback shows time-on-task reductions of 70–90 percent for routine configuration work.

Leadership

Cirra AI was founded in 2024 by Jelle van Geuns, a Dutch-born engineer, serial entrepreneur, and veteran of the Salesforce ecosystem with over 14 years of platform experience. Before Cirra, Jelle bootstrapped Decisions on Demand, an AppExchange ISV whose rules-based lead-routing engine is used by multiple Fortune 500 companies. Under his leadership the firm reached seven-figure ARR without external funding, demonstrating a combination of deep technical innovation and pragmatic go-to-market execution. Jelle began his career at ILOG (later IBM), where he managed global solution-delivery teams and developed expertise in enterprise optimisation and AI-driven decisioning. He holds an M.Sc. in Computer Science from Delft University of Technology and speaks frequently on AI-assisted administration, MCP integration patterns, and human-in-the-loop automation at Salesforce community events and podcasts. The leadership team includes Jeff Bajayo (VP Sales), a seasoned Salesforce and SaaS professional with over a decade of experience, and Latrice Barnett (Advisor, Marketing), who brings 10+ years of partnership and ecosystem marketing expertise from the Salesforce ecosystem.

Why Cirra AI Matters

MCP-native architecture – Rather than building a proprietary agent UI, Cirra embraces the Model Context Protocol as a universal connector, letting customers use the AI tool they already prefer (Claude, ChatGPT, Cursor, or any future MCP-compatible client) while Cirra handles the Salesforce integration layer.

Deep vertical focus – The Skills Library encodes thousands of Salesforce best-practice patterns, scoring rubrics, and validation scripts that generic AI assistants lack. This domain intelligence produces higher-quality, more reliable outputs for Apex, Flows, LWC, permissions, and metadata operations than general-purpose prompting alone.

Enterprise-grade security – The platform uses OAuth authentication, encrypted endpoints, and inherits the connected user's Salesforce permission model. Cirra never stores Salesforce credentials, and all actions are logged for auditability, which are critical requirements for regulated industries adopting AI tooling.

Works for admins and partners alike – Individual administrators use Cirra to eliminate setup-menu drudgery and respond faster to business requests. Consulting firms use it to scale senior-level expertise across delivery teams, enabling more projects delivered at higher quality and lower cost through improved documentation and test coverage.

Accessible to non-developers – Anyone with a paid Claude or ChatGPT subscription can install the skills and connect the MCP server. No coding, no complex integrations: just sign up and start working.

Future Outlook

Cirra AI continues to expand its capabilities with the upcoming Admin Agent (launching June 2026), which will bring fully autonomous multi-step task execution to Salesforce administration. The company is also extending platform compatibility to additional AI marketplaces and broadening its skills library to cover more Salesforce clouds and use cases. By combining open standards, domain-specific intelligence, and a relentless focus on the admin experience, Cirra AI is building the de-facto AI integration layer for Salesforce administration.

DISCLAIMER

This document is provided for informational purposes only. No representations or warranties are made regarding the accuracy, completeness, or reliability of its contents. Any use of this information is at your own risk. Cirra shall not be liable for any damages arising from the use of this document. This content may include material generated with assistance from artificial intelligence tools, which may contain errors or inaccuracies. Readers should verify critical information independently. All product names, trademarks, and registered trademarks mentioned are property of their respective owners and are used for identification purposes only. Use of these names does not imply endorsement. This document does not constitute professional or legal advice. For specific guidance related to your needs, please consult qualified professionals.